Crawl optimisation is the process of making a website more accessible to search engines so they can index the site more effectively. The result is a website that is better able to rank in search engine results pages (SERPs) for relevant keywords. To increase traffic and improve ROI for your business, talk to Supple about crawl optimisation and other on-page SEO strategies.

Complete Guide to Optimising Crawl Budget for SEO

The internet is vast. There are more than 1.9 billion websites and trillions of pages on the World Wide Web.

With such a huge pool of information to analyse, picking the most relevant content to answer a searcher’s query is a demanding task for search engines.

In addition, search engines do not have unlimited time and resources to continuously check every page on the internet. Instead, they crawl only a limited number of pages on each website, and that limit is referred to as the crawl budget.

Read on to understand what crawl budget is, why it matters, and how you can optimise it for SEO.

OUR SEO PROCESS

The First Page of Google!

Research

In this phase, our SEO consultants will work with you to understand your business and define the goals of our SEO campaign. We will perform competitor research and search intent analysis before identifying the keywords that we think you should target.

Audit

Our comprehensive SEO audit looks at everything from content gap analysis and internal links to site architecture, backlink profiles, technical SEO, and crawl optimisation. Our team will identify all the growth opportunities based on your current website and SEO performance.

Strategy

In the strategy phase, we evaluate the findings from our audit and prioritise SEO tasks. We have an Impact / Effort / Action Priority Matrix that we follow to prioritise tasks. Our SEO specialists create an action plan for your SEO campaign, which includes a timeline with key milestones we have to achieve.

Implementation

Our SEO team, designers and developers work with you to start actioning the high-priority tasks from the previous phase, and then we move on to the lower-priority tasks. This process allows us to knock out some early quick wins, then have a strategy in place to tackle the high-effort tasks. We keep the low-reward tasks on the back burner and tackle them when we get time as the campaign progresses.

Reporting

We have systems and processes in place to make sure that we track and report on your SEO campaign.

As you can imagine, this is not the end. Our SEO consultants are constantly analysing and tweaking your campaign to achieve the best possible results on the 1st page of Google. It is an ongoing process, and we are with you for the long term!

What is SEO Crawl Budget?

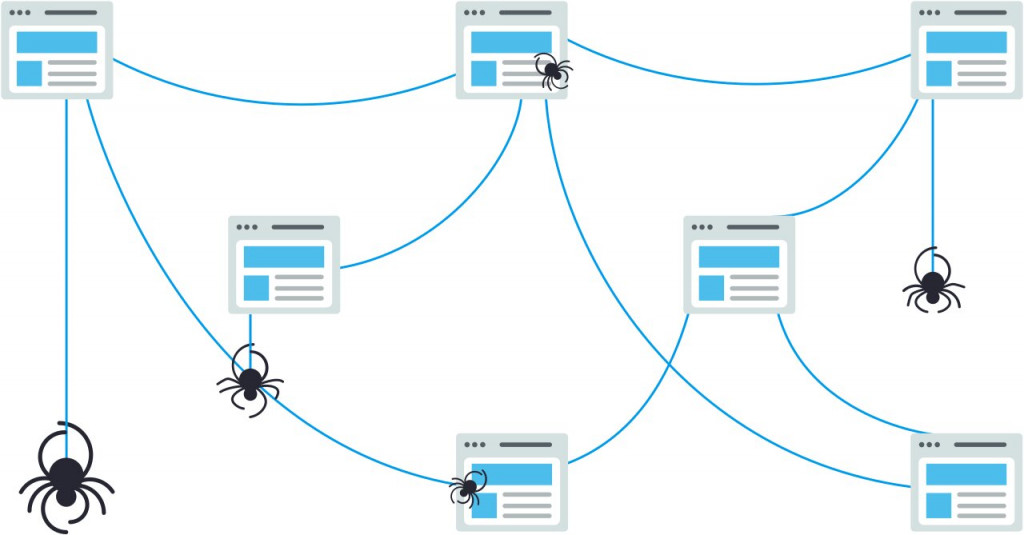

To start with, search engines deploy bots (also known as crawlers or spiders) that scan the internet to find new and updated content. This process is called crawling.

Once pages are crawled, search engines index them. When someone searches, they rank the indexed pages in order of relevance. In other words, the page that the search engine judges to best answer the searcher’s query ranks at the top, and so on.

Because a huge share of organic traffic goes to the first page of search results, you want to make the most of every search engine resource available to you, including your crawl budget.

NOTE: Google is testing continuous scrolling of up to four pages of results in the US, so this trend may change in the future.

The Google Webmaster Central Blog defines crawl budget as:

“Taking crawl rate and crawl demand together we define crawl budget as the number of URLs Googlebot can and wants to crawl.”

In simple terms, crawl budget is the number of pages a search engine can and wants to crawl on your website within a certain time span. Once your crawl budget is used up, the search engine stops crawling your site for that period and moves on to other sites.

Why Does Crawl Budget Matter in SEO?

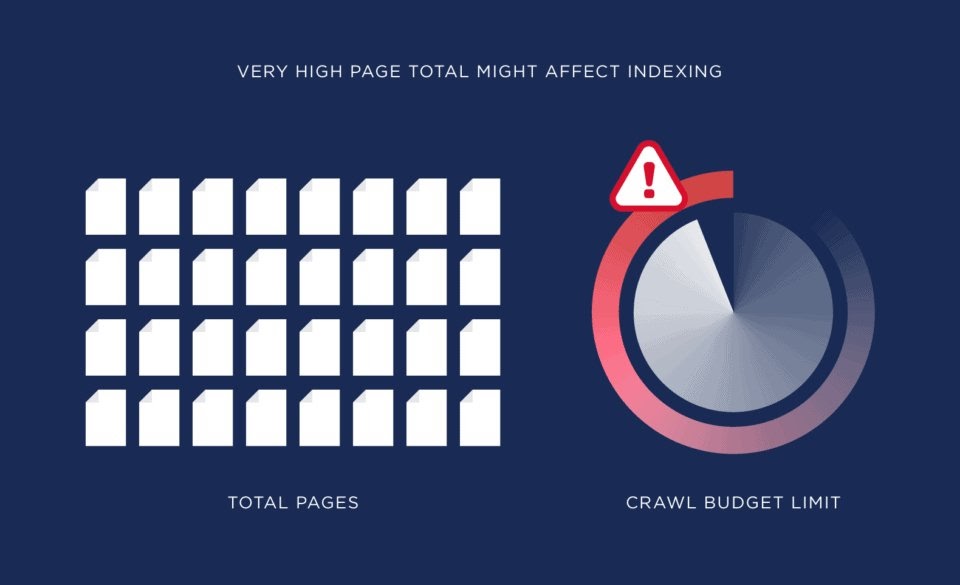

Ranking your website or web pages higher in the search results is the primary reason you perform SEO. But if the number of pages on your website exceeds your site’s crawl budget, some pages may not get crawled and stay unindexed. Those pages won’t rank or appear in search results.

This is why you want search engines to find and understand as many of the pages you want to rank as possible, as quickly as possible. When you add new pages to your site, the sooner they get indexed, the sooner those pages gain exposure and start benefiting your business.

Having said that, you only need to worry about crawl budget if you have a very large website with thousands of pages, such as a large eCommerce store or a large publisher site.

However, you would still want to use your crawl budget wisely and make the best of it rather than wasting it on pages you don’t want to be discovered.
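To judge whether crawl budget is worth worrying about for your site, a rough back-of-the-envelope check is to divide the number of URLs you want crawled by the average number of pages Googlebot crawls per day. The sketch below is a simplification: it assumes a constant daily crawl rate and that every URL needs crawling exactly once, and the function name and figures are hypothetical.

```python
import math


def days_to_crawl_all(total_urls: int, pages_per_day: float) -> int:
    """Rough estimate of how many days one full pass over the site would take.

    Assumes a constant crawl rate and that each URL is crawled exactly once,
    which real search engine crawlers do not guarantee.
    """
    if pages_per_day <= 0:
        raise ValueError("pages_per_day must be positive")
    return math.ceil(total_urls / pages_per_day)


# Hypothetical examples: a small brochure site vs a large online store,
# both crawled at about 17 pages a day.
print(days_to_crawl_all(50, 17))      # 3
print(days_to_crawl_all(20_000, 17))  # 1177
```

If the answer runs to weeks or months, new and updated pages may wait a long time before they are crawled again, which is when crawl budget optimisation starts to pay off.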

Client Testimonials

- Rated 4.9 from 428 Google Reviews

Extremely reliable and super friendly staff. Jani and Sam helped us with our digital strategy. Would recommend to everyone who wants to get their SEO done.

Mark, Ron and Param have been incredible to work with. From the very beginning, they took the time to understand our business goals and crafted a clear SEO strategy that is working really well.

Mark and Jani are fantastic to deal with and business has never been better

We are super impressed with this SEO team. They’re honest, transparent, and really know their stuff. They made everything easy to understand and were great to work with from the start. We saw our targeted keywords hit page one with half of them in the #1 spot, and organic traffic is up by nearly 20%. We recommend Supple for fast and high quality results.

I have to speak very highly of the crew of Supple Digital, Mark, Jani and Abbas do an amazing job of my website. Very highly professional and knowledgeable of their products and services. If you are in need of a good website go to these guys

How Does Crawl Budget Work?

Crawl budgets vary from website to website, and search engines assign them automatically.

For instance, Google allocates crawl budget to your site based on factors such as:

- Size of the website: If your site is bigger, you’ll need more crawl budget.

- Frequency of updates: Google prioritises crawling websites that update their content regularly.

- Link structure: Internal linking on your website also helps Google understand your site better.

- Server performance: Your website’s speed and load time can also affect your crawl budget.

With that said, let’s take a look at a few important concepts that will help you understand how the crawl budget works.

Crawl Rate Limit

Search engines don’t want frequent crawling to consume so many server resources that it degrades the experience for your site visitors. So they control their visits to your website by setting a crawl rate limit.

Let’s say Googlebot crawls your website. Based on how your server responds, it raises or lowers your crawl rate limit. If the server responds quickly without errors, Google may raise the limit. If the server slows down or returns server errors, Google lowers the limit.

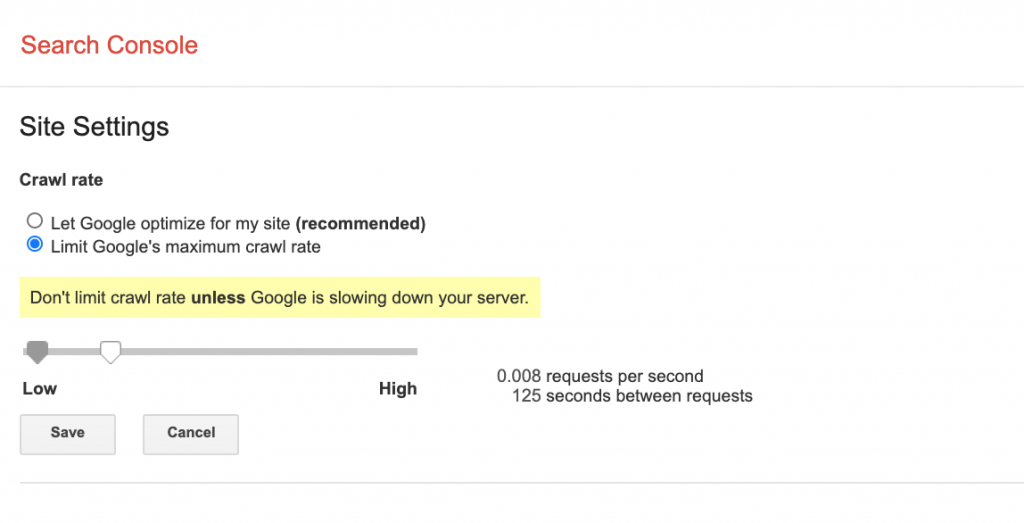

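Google Search Console used to offer a crawl rate setting that let site owners lower this limit, but Google retired that tool in January 2024. Googlebot now adjusts its crawl rate automatically based on how your server responds.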

Thus, the crawl rate limit is a key contributor to your crawl budget.
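The feedback loop described above can be illustrated with a toy model in which a crawler speeds up while responses are fast and error-free and backs off when they slow down or fail. This is a hypothetical sketch of the general idea, similar in spirit to the additive-increase/multiplicative-decrease approach used in TCP congestion control; it is not Google’s actual algorithm, and the 500 ms and 1% thresholds and the step sizes are made-up assumptions.

```python
def adjust_crawl_rate(current_rate: float, avg_response_ms: float, error_rate: float,
                      min_rate: float = 1.0, max_rate: float = 10.0) -> float:
    """Return a new crawl rate (requests per second) for a hypothetical crawler.

    Assumption: responses under 500 ms with under 1% errors count as healthy.
    Healthy server: increase the rate by a small fixed step.
    Struggling server: halve the rate.
    """
    healthy = avg_response_ms < 500 and error_rate < 0.01
    new_rate = current_rate + 0.5 if healthy else current_rate / 2
    # Keep the rate within the crawler's configured bounds.
    return max(min_rate, min(max_rate, new_rate))


rate = 2.0
rate = adjust_crawl_rate(rate, avg_response_ms=200, error_rate=0.0)    # fast, no errors
print(rate)  # 2.5
rate = adjust_crawl_rate(rate, avg_response_ms=1200, error_rate=0.05)  # slow, erroring
print(rate)  # 1.25
```

The asymmetry is the point of the design: the crawler creeps up slowly when things go well but retreats quickly when your server struggles, which is why a fast, stable server tends to get crawled more.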

Crawl Demand

Crawl demand reflects how worthwhile it is for the search engine to crawl or re-crawl the pages on your website. The factors that influence crawl demand are:

- Popularity: The number of inbound links to a page and the search queries it ranks for.

- Recency: URLs that are updated regularly get more crawl demand from search engines.

- Type of page: The more your page is likely to change, the higher crawl demand it has. For example, product pages change more frequently than policy pages.
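Google’s definition quoted earlier combines two limits: how many URLs Googlebot can crawl (the crawl rate limit) and how many it wants to crawl (crawl demand). One simple way to picture that is to rank URLs by a demand score built from the three factors above and crawl only as many as the rate limit allows. The scoring formula, weights, field names and capacity below are hypothetical assumptions for illustration; search engines do not publish such a formula.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    inbound_links: int       # popularity: links pointing at the page
    days_since_update: int   # recency: how long since the content changed
    changes_often: bool      # page type: e.g. product page vs policy page


def demand_score(page: Page) -> float:
    """Hypothetical crawl-demand score; higher means more worth (re)crawling."""
    recency_bonus = 10 / (1 + page.days_since_update)
    volatility_bonus = 5 if page.changes_often else 0
    return page.inbound_links + recency_bonus + volatility_bonus


def pages_to_crawl(pages: list[Page], capacity: int) -> list[str]:
    """Crawl the highest-demand URLs, but no more than the rate limit allows."""
    ranked = sorted(pages, key=demand_score, reverse=True)
    return [page.url for page in ranked[:capacity]]


example_pages = [
    Page("/products/blue-shoes", inbound_links=12, days_since_update=1, changes_often=True),
    Page("/privacy-policy", inbound_links=2, days_since_update=400, changes_often=False),
    Page("/blog/crawl-budget", inbound_links=30, days_since_update=30, changes_often=False),
]
print(pages_to_crawl(example_pages, capacity=2))
# ['/blog/crawl-budget', '/products/blue-shoes']
```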

SEO Team Lead

- Bishal Shrestha

- Head of SEO (9+ Years Experience)

Bishal Shrestha is an innovative SEO leader and digital strategist with over 9 years of expertise in driving organic growth for businesses across diverse industries. Bishal combines technical proficiency with strategic vision, excelling in data-driven decision making and delivering measurable results. He specialises in technical SEO optimisation, advanced analytics, and scalable growth strategies.

Bishal brings a unique blend of software engineering background and marketing acumen to his role at Supple Digital. With certifications in Google AdWords and Google Analytics, he leads comprehensive SEO campaigns that consistently elevate brands in competitive digital landscapes. His holistic approach focuses on sustainable, long-term growth through innovative solutions.

How to Track the Crawl Budget for Your Website?

Compared with other search engines, Google is more transparent about how it crawls your website.

Below we have discussed the two most common ways you can track the crawl budget of your website.

Track in Google Search Console

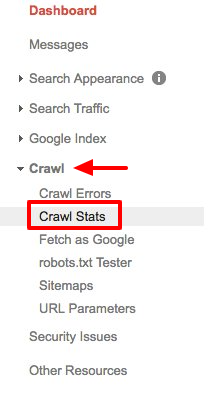

If you have verified your site with Google Search Console, you can get insights into how Google crawls it. Here’s how to check it step by step:

- Log into Google Search Console and select your site.

- Go to Settings and open the Crawl stats report.

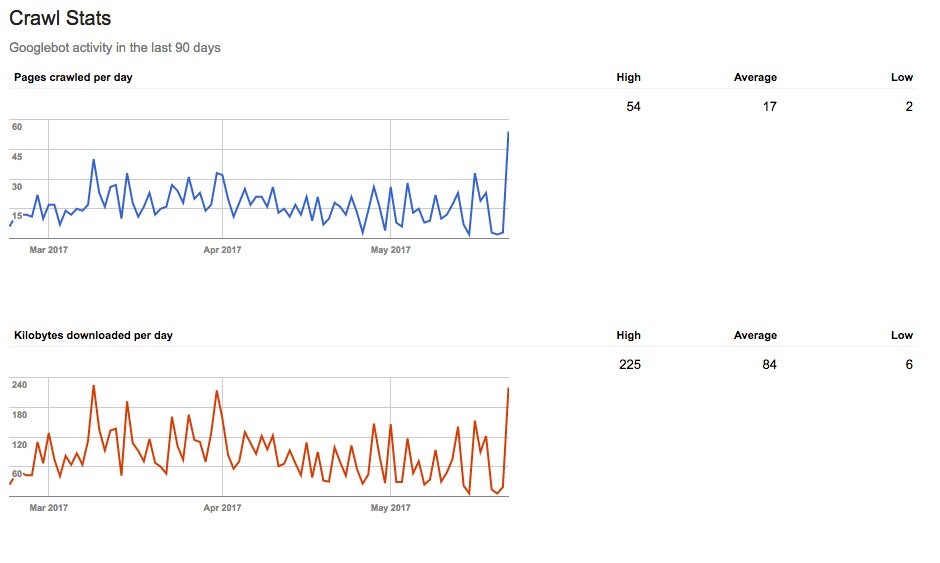

Now, you’ll see your crawl stats report. It may look similar to the screenshot above.

We can see that Googlebot crawled an average of 17 pages a day over the last 90 days for one of our test websites. So the average crawl budget for this site is about 17 pages per day.
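If you export the daily crawl figures from the Crawl stats report (for example, as a CSV), the average is simple arithmetic. The sketch below assumes a hypothetical two-column CSV with `date` and `pages_crawled` columns; the real export may use different column names, so adjust the `column` parameter to match yours. The sample rows are made up.

```python
import csv
import io
from statistics import mean


def average_daily_crawl(csv_text: str, column: str = "pages_crawled") -> float:
    """Average pages crawled per day from a CSV with one row per day.

    The column name is an assumption; match it to your own export.
    """
    rows = csv.DictReader(io.StringIO(csv_text))
    counts = [int(row[column]) for row in rows]
    if not counts:
        raise ValueError("the CSV has no data rows")
    return mean(counts)


# Made-up sample rows in the assumed two-column format.
sample_export = """date,pages_crawled
2024-01-01,15
2024-01-02,19
2024-01-03,17
"""
print(average_daily_crawl(sample_export))  # 17
```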

Check Server Logs

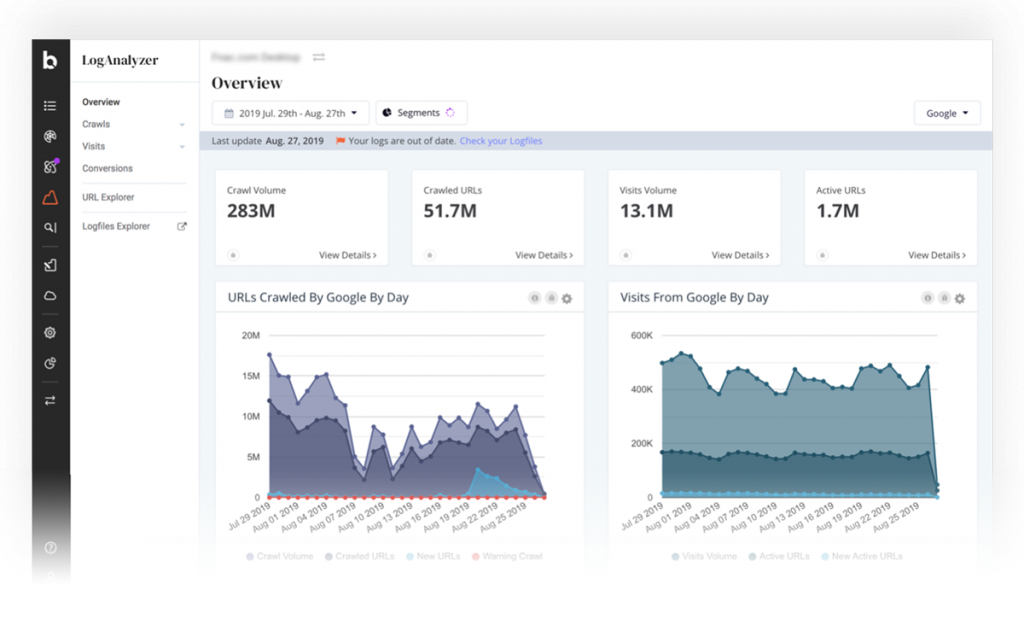

Your web server records the requests made to your website in log files. Every time a user or Googlebot requests a page, the server writes an entry to its access log.

You can check these logs manually, or you can use a professional log analyser tool that organises the data so that it’s easier to interpret.

Thus, you can interpret the server log reports and track your crawl budget activities for the given period.
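Before reaching for a dedicated tool, you can count Googlebot requests per day straight from an access log. This sketch assumes the common Apache/Nginx “combined” log format and simply looks for “Googlebot” in the user-agent string. Because user agents can be spoofed, Google recommends confirming genuine Googlebot traffic with a reverse DNS lookup, which this sketch does not do. The sample log lines are made up.

```python
import re
from collections import Counter
from datetime import datetime

# Combined log format: IP ident user [time] "request" status size "referrer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[(?P<time>[^\]]+)\] "[^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_per_day(lines):
    """Count requests per calendar day whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue
        # "10/Oct/2024:13:55:36 +0000" -> keep only the date part
        day = datetime.strptime(match.group("time").split(":", 1)[0], "%d/%b/%Y").date()
        hits[day] += 1
    return hits


sample_log = [
    '66.249.66.1 - - [10/Oct/2024:13:55:36 +0000] "GET /products/ HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.5 - - [10/Oct/2024:13:56:01 +0000] "GET / HTTP/1.1" 200 2048 "-" '
    '"Mozilla/5.0 (Windows NT 10.0)"',
    '66.249.66.1 - - [11/Oct/2024:08:01:12 +0000] "GET /blog/ HTTP/1.1" 404 512 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]
for day, count in sorted(googlebot_hits_per_day(sample_log).items()):
    print(day, count)
```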

Factors That Negatively Impact Crawl Budget

There can be many elements that might be affecting your crawl budget adversely. You can conduct an SEO audit to highlight such red flags for your website.

Meanwhile, let’s discuss a few of the factors that can waste your crawl budget.

Duplicate Content

Having very similar or identical content on multiple pages of your website is referred to as duplicate content. Sometimes content gets duplicated unintentionally or due to technical errors.

Whatever the reason, duplicate content hurts your overall SEO performance as it confuses the search engines. Moreover, it doesn’t add any value to your visitors.

In terms of crawl budget, the search engine crawls many URLs on your site only to find the same content on multiple pages. Your budget is spent on the same resources without any indexing or ranking benefit.

So if you don’t want crawlers to spend time on duplicate pages, you can:

- Use canonical tags: They help search engines identify the original (preferred) version of the content.

- Organise your content into topic clusters: This helps your content strategy avoid duplication.

Moreover, ensure that you follow all the other required processes to avoid duplicate content on your site.
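To find exact duplicates before deciding where canonical tags are needed, one simple approach is to normalise each page’s text and group URLs by a hash of it. This is a sketch that assumes you already have the extracted text of each page (the URLs and text below are made up); it only catches pages whose text is identical after normalisation, not near-duplicates that differ by a few words.

```python
import hashlib
import re
from collections import defaultdict


def normalise(text: str) -> str:
    """Lower-case and collapse whitespace so trivial differences don't matter."""
    return re.sub(r"\s+", " ", text).strip().lower()


def duplicate_groups(pages: dict[str, str]) -> list[list[str]]:
    """Group URLs whose normalised text is identical."""
    groups = defaultdict(list)
    for url, text in pages.items():
        digest = hashlib.sha256(normalise(text).encode("utf-8")).hexdigest()
        groups[digest].append(url)
    return [urls for urls in groups.values() if len(urls) > 1]


page_texts = {
    "/shoes?colour=blue": "Blue running shoes.  Free shipping.",
    "/shoes/blue": "blue running shoes. free shipping.",
    "/about": "About our store.",
}
print(duplicate_groups(page_texts))  # [['/shoes?colour=blue', '/shoes/blue']]
```

Each group is a candidate for a single preferred URL, with the other URLs in the group pointing to it through a canonical tag.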

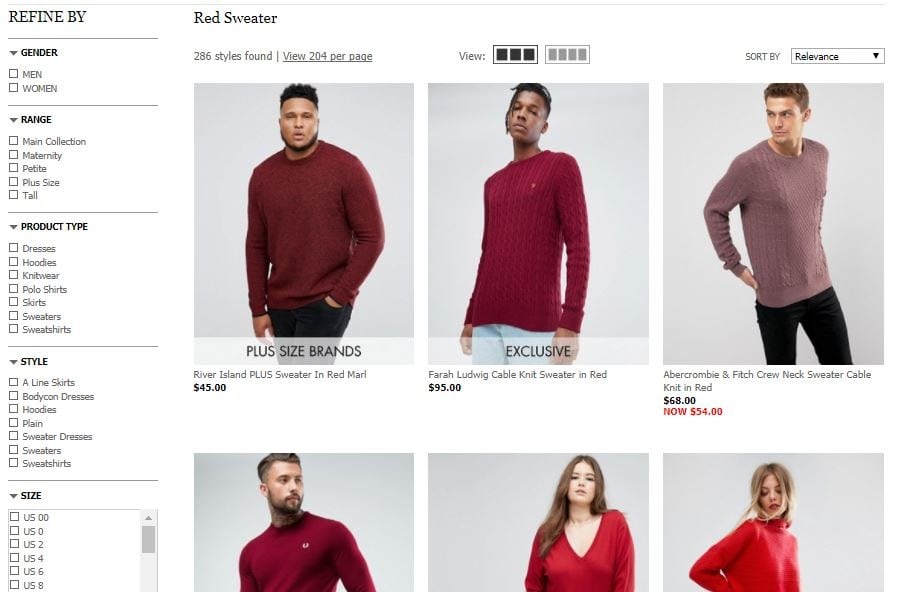

Faceted Navigation

Faceted navigation, or faceted search, refers to an in-page navigation system. Okay, let’s simplify it. When you shop online, the eCommerce site lets you filter the products you searched for by attributes such as size, colour or price; that’s faceted navigation.

While it makes shoppers’ lives easier, it gives search engines a hard time. Because faceted navigation can generate countless URL variations of the same page (one for each combination of filters), it wastes crawl budget on content that largely repeats the original page. It may also cause other issues like:

- Duplicate content

- Index bloat: Search engines index pages that don’t have any search value

- PageRank dilution: The filtered URL variations split the PageRank that would otherwise flow to your important pages.

We understand that it may not be possible to avoid faceted search if you’re running an eCommerce store, job portal, or travel booking site etc. However, you can always ensure that you fix the issues caused by faceted navigation.
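To see why faceted navigation can swamp a crawl budget, count the URLs it can generate. If each filter can be left unset or set to one of its values, and every combination gets its own URL, the number of variations is the product of (values + 1) across all filters. The facet names and value counts below are hypothetical, and the count ignores parameter ordering and multi-select filters, both of which would make it larger still.

```python
from math import prod


def facet_url_count(facets: dict[str, int]) -> int:
    """Number of distinct filter combinations for one category page.

    Each facet is either unset or set to exactly one of its values,
    so it contributes (values + 1) options. The unfiltered page is included.
    """
    return prod(values + 1 for values in facets.values())


# Hypothetical filters on a single shoe category page.
shoe_facets = {"colour": 8, "size": 12, "brand": 15, "price_band": 5}
print(facet_url_count(shoe_facets))  # 9 * 13 * 16 * 6 = 11232
```

More than eleven thousand crawlable URLs for one category page, almost all showing overlapping products, is exactly the kind of waste described above.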

Soft 404 Errors

Normally, when a page no longer exists, your server returns a standard 404 error to inform visitors, and you may also customise that 404 page to help them navigate.

With a soft 404 error, however, the page doesn’t exist, but the server returns a 200 OK status code instead of a 404 Not Found error. This misleads search engines into treating the page as valid.

As a result, search engines keep spending your crawl budget on such pages. They may also show these pages in search results, which creates a poor experience for your visitors.
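A rough way to spot likely soft 404s in your own crawl data is to flag pages that return 200 OK but whose content reads like an error page or is empty. The phrase list below is an assumption for illustration; search engines use their own unpublished signals, so treat matches as candidates to review rather than confirmed errors.

```python
# Assumed phrases that often appear on "not found" pages; extend for your site.
NOT_FOUND_PHRASES = ("page not found", "no longer available", "nothing was found")


def looks_like_soft_404(status_code: int, body_text: str) -> bool:
    """Flag 200 OK responses whose content looks like an error page or is empty."""
    if status_code != 200:
        return False  # a genuine 404/410 is a hard error, not a soft 404
    text = body_text.strip().lower()
    if not text:
        return True  # an empty page served with 200 OK
    return any(phrase in text for phrase in NOT_FOUND_PHRASES)


print(looks_like_soft_404(200, "Sorry, this page is no longer available."))  # True
print(looks_like_soft_404(404, "Page not found"))                            # False
print(looks_like_soft_404(200, "Blue running shoes with free shipping."))    # False
```

Pages flagged this way should return a proper 404 status code, or redirect to a relevant replacement if the content has moved.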

Hacked Pages

Unfortunately, your site or some of its pages may be compromised in a cyber attack. Since Google aims to provide the best search results to its users, once it detects such pages, it may stop crawling and indexing them.

However, these pages may still eat into your crawl budget. So it’s recommended that you remove them from your website and return a 404 Not Found status code.

Redirect Chains

When you link to a page that redirects to another page on your site, crawlers have to request two separate URLs to reach the content. This means you’re spending extra crawl budget to reach a single page.

Now, if you have too many redirects or chains of redirect links on your website, it can use up a lot of your crawl budget without crawling many unique URLs.
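Given a map of redirects (for example, exported from a site crawler), you can trace each chain to its final destination and then update links to point there directly. The redirect map below is hypothetical, and the sketch also guards against redirect loops, which would otherwise never end.

```python
def resolve_redirect(url: str, redirects: dict[str, str]) -> list[str]:
    """Follow `url` through the redirect map and return every URL in the chain."""
    chain = [url]
    seen = {url}
    while chain[-1] in redirects:
        next_url = redirects[chain[-1]]
        if next_url in seen:
            raise ValueError("redirect loop: " + " -> ".join(chain + [next_url]))
        chain.append(next_url)
        seen.add(next_url)
    return chain


# Hypothetical redirect map: old URL -> URL it redirects to.
redirect_map = {
    "/old-shoes": "/shoes-2023",
    "/shoes-2023": "/shoes",
}
chain = resolve_redirect("/old-shoes", redirect_map)
print(chain)  # ['/old-shoes', '/shoes-2023', '/shoes']
print(f"{len(chain) - 1} hops; link straight to {chain[-1]}")  # 2 hops; link straight to /shoes
```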

How to Optimise Crawl Budget for SEO?

Besides fixing the above issues that waste your crawl budget, here are a few crawl budget optimisation tactics you can follow.

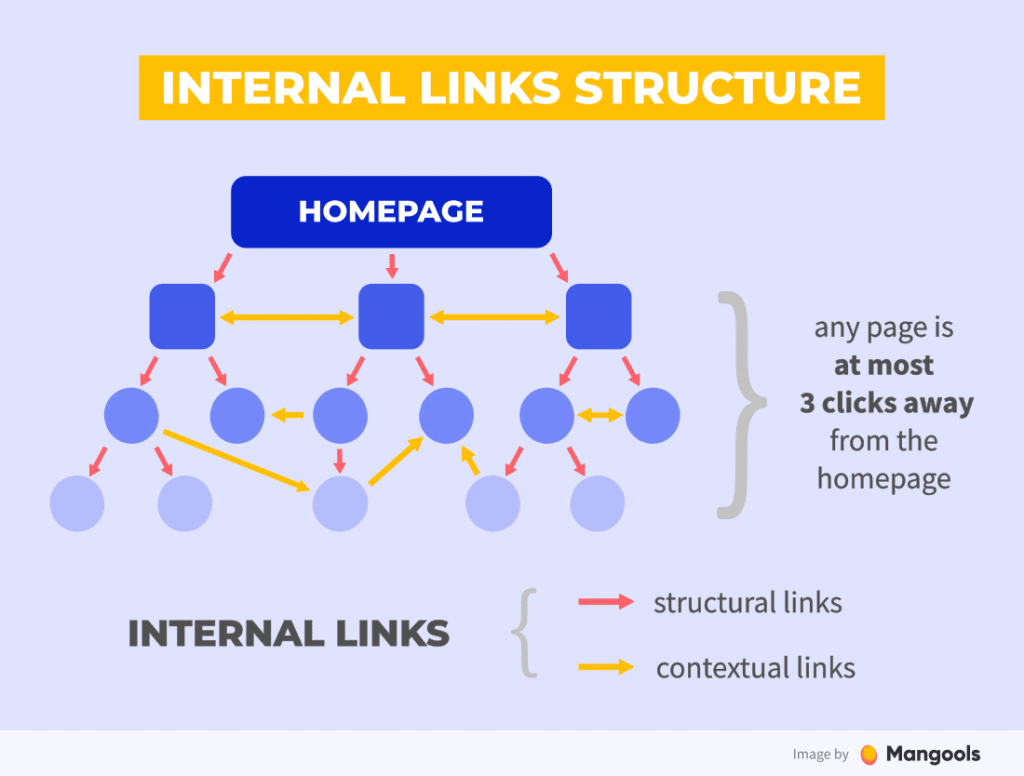

Improve Your Internal Linking

Search engines prioritise crawling and indexing the most valuable pages of a website. Two of the signals they use to identify such pages are backlinks (external sites linking to your pages) and internal links on your web pages.

Backlinks often carry more weight, but they aren’t always in your control. Internal linking, on the other hand, is completely in your control.

Hence, you must optimise your internal linking practices. For this, ensure that:

- The most important pages have the highest number of internal links from other pages of your website

- All valuable pages are linked from the homepage of your site

- All the pages on your website have at least one page linking to them

- Internal links are audited at regular intervals, at least once a year
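If you have a list of internal links from a site crawl, counting inbound links per page shows which pages get the most internal support and which are orphans with none. The page set and link list below are hypothetical; in practice they would come from your crawler’s export.

```python
from collections import Counter


def inbound_link_counts(links: list[tuple[str, str]], pages: set[str]) -> Counter:
    """Count internal links pointing at each known page, ignoring self-links."""
    counts = Counter({page: 0 for page in pages})
    for source, target in links:
        if target in pages and source != target:
            counts[target] += 1
    return counts


# Hypothetical crawl output: known pages and (source, target) internal links.
site_pages = {"/", "/shoes", "/shoes/blue", "/returns-policy", "/old-landing-page"}
internal_links = [
    ("/", "/shoes"),
    ("/", "/returns-policy"),
    ("/shoes", "/shoes/blue"),
    ("/shoes/blue", "/shoes"),
]
counts = inbound_link_counts(internal_links, site_pages)
orphans = sorted(page for page, n in counts.items() if n == 0 and page != "/")
print(counts.most_common(1))  # [('/shoes', 2)]
print(orphans)                # ['/old-landing-page']
```

The homepage is excluded from the orphan check because crawlers reach it directly rather than through internal links.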

Build SEO-friendly Website Structure

A simple website structure is ideal for SEO because search engine crawlers start from the homepage and then follow links to other pages. A straightforward site architecture makes crawling easier and faster, which helps you make the most of your crawl budget.

So consider a flat site structure that lets crawlers reach any page on your site in fewer than four clicks from the homepage. It also makes navigation easier for your users.
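Click depth can be measured with a breadth-first search from the homepage over your internal link graph: each page’s depth is the fewest clicks needed to reach it. The link graph below is a hypothetical example, and pages more than three clicks deep are flagged, in line with the fewer-than-four-clicks guideline.

```python
from collections import deque


def click_depths(graph: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Fewest clicks from `home` to every reachable page (breadth-first search)."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for linked in graph.get(page, []):
            if linked not in depths:  # first visit is always the shortest path in BFS
                depths[linked] = depths[page] + 1
                queue.append(linked)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
site_graph = {
    "/": ["/shoes", "/about"],
    "/shoes": ["/shoes/running"],
    "/shoes/running": ["/shoes/running/blue"],
    "/shoes/running/blue": ["/shoes/running/blue/size-guide"],
}
depths = click_depths(site_graph)
too_deep = [page for page, depth in depths.items() if depth > 3]
print(too_deep)  # ['/shoes/running/blue/size-guide']
```

Pages that never appear in the result are unreachable from the homepage through internal links, which is an even bigger problem than excessive depth.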

Create a Robots.txt File

You can create a robots.txt file to guide search engine bots on which pages of your website they may crawl. All major search engines, including Google, Bing, and Yahoo, recognise and follow robots.txt instructions.

With a robots.txt file, you can block the crawling of unimportant pages on your website. This lets search engine bots spend more time on your valuable pages, index them, and make the most of your crawl budget.
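Before deploying robots.txt changes, it’s worth checking which URLs they actually block. Python’s standard-library `urllib.robotparser` can parse a set of rules and answer `can_fetch` queries; the rules, paths and example.com domain below are hypothetical. Note that this parser uses simple prefix matching and does not implement every extension Google supports (such as `*` and `$` wildcards), so verify wildcard rules separately.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: keep crawlers out of the cart and internal search results.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(path: str, user_agent: str = "Googlebot") -> bool:
    """Check a path on a placeholder domain against the rules above."""
    return parser.can_fetch(user_agent, "https://example.com" + path)


for path in ["/products/blue-shoes", "/cart/checkout", "/search?q=shoes"]:
    print(path, "allowed" if is_crawlable(path) else "blocked")
# /products/blue-shoes allowed
# /cart/checkout blocked
# /search?q=shoes blocked
```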

Enhance Your Website Link Profile

Search engines prefer crawling popular websites more often as they want to index fresh and valuable content. They also allocate a higher crawl budget for such sites.

Furthermore, web crawlers use the backlink profile of the websites to differentiate between the more reputable and less trustworthy websites. It’s one of the most fundamental SEO concepts that impact your site authority, credibility, and rankings.

So if you want to optimise your crawl budget and overall SEO performance, make sure that you get quality backlinks to your website. Remember, quality and quantity both matter when it comes to building your backlink profile.

Clear out Thin Content

As discussed earlier, search engines’ primary focus is to serve searchers the best, most valuable information available. Just like duplicate content, thin or low-quality content adds no value for your users and does nothing for your SEO performance.

First, you need to find the pages with:

- Little or no text content

- Duplicate content

- Content that was published many months ago and doesn’t attract any traffic

- Auto-generated content, etc.

Then remove the unnecessary pages, block crawling of low-value pages with your robots.txt file, and update the old content that’s worth keeping. This will help you make the most of your crawl budget.
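If you export each page’s word count and recent organic traffic (for example, from a crawler and your analytics tool), a short filter can shortlist candidates for review. The field names, the 300-word threshold and the sample pages below are hypothetical assumptions; a low word count alone doesn’t make a page thin, so review the shortlist manually before removing or blocking anything.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    word_count: int
    organic_sessions_12m: int  # organic visits over the last 12 months
    auto_generated: bool = False


def thin_content_candidates(pages, min_words=300, min_sessions=1):
    """Map each flagged URL to the reasons it was flagged."""
    flagged = {}
    for page in pages:
        reasons = []
        if page.word_count < min_words:
            reasons.append("little text")
        if page.organic_sessions_12m < min_sessions:
            reasons.append("no organic traffic")
        if page.auto_generated:
            reasons.append("auto-generated")
        if reasons:
            flagged[page.url] = reasons
    return flagged


sample_pages = [
    PageStats("/guide/crawl-budget", 1800, 950),
    PageStats("/tag/misc", 40, 0, auto_generated=True),
    PageStats("/news/2019-office-move", 350, 0),
]
for url, reasons in thin_content_candidates(sample_pages).items():
    print(url, reasons)
```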

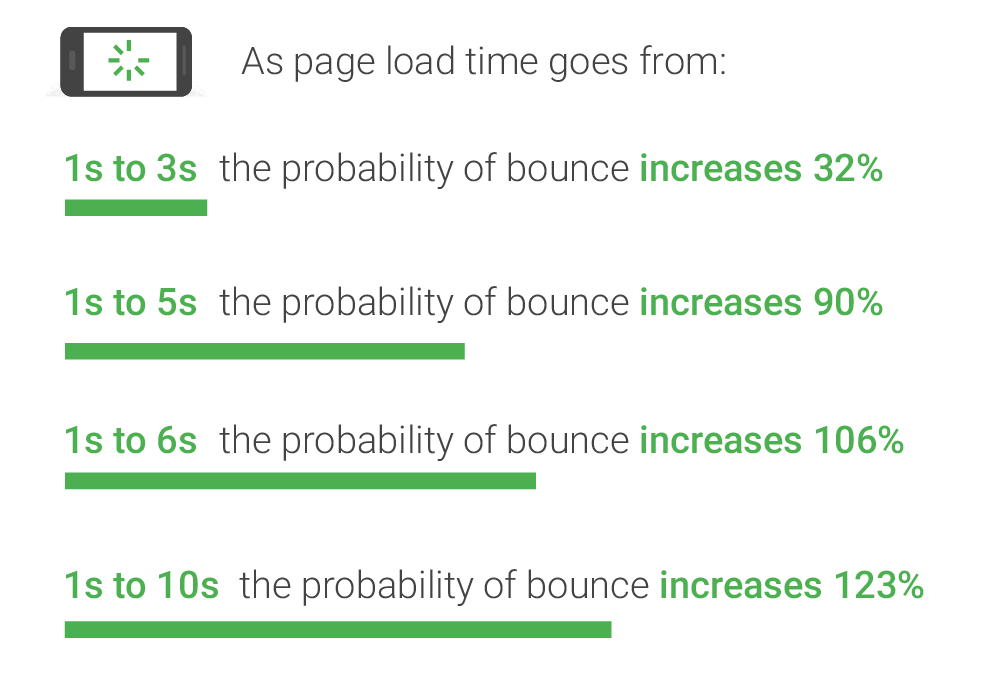

Speed up Your Website

Your website speed is an important ranking factor for search engines as it directly impacts the user experience. A faster website equals a better user experience. On the other hand, slower pages increase the bounce rate.

For instance, Google’s research found that as page load time goes from one second to ten seconds, the probability of a mobile visitor bouncing increases by 123%. A higher bounce rate can indicate that visitors aren’t finding what they need on your site, which may hurt your SEO efforts.

From a crawl budget perspective, if your website loads faster, crawlers can scan more pages in less time.

As a result, more content from your website gets indexed and ranked if it answers the search queries.
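A simplified calculation shows why response time matters. If a crawler spends a fixed amount of time on your site and fetches pages one after another, the number of pages it can fetch is that time divided by the average response time. The 10-minute window and response times below are hypothetical, and real crawlers fetch pages in parallel, so treat this as an illustration of the proportion rather than a prediction.

```python
def pages_in_window(window_seconds: float, avg_response_seconds: float) -> int:
    """Pages fetched one after another within a fixed time window (simplified model)."""
    return int(window_seconds // avg_response_seconds)


window = 10 * 60  # hypothetical 10 minutes of crawl time
print(pages_in_window(window, 2.0))  # 300
print(pages_in_window(window, 0.5))  # 1200
```

Under this model, cutting the average response time from 2 seconds to 0.5 seconds quadruples the number of pages covered in the same window.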

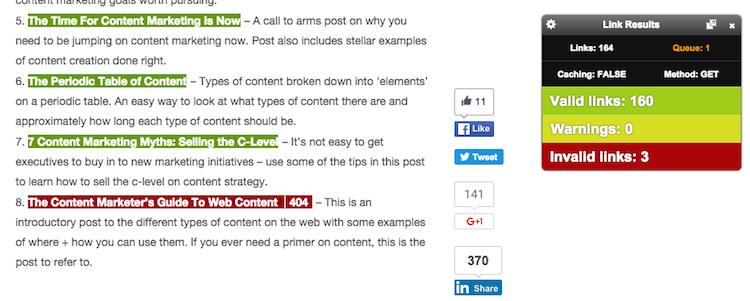

Fix Broken Links

Broken (or dead) links are links to internal or external pages that no longer exist, so the server returns a 404 error.

Because these links are still live on your website, bots follow them and spend your crawl budget, but you don’t gain anything from it.

So, here’s what you need to do:

- Find the broken links on your website using a broken link checker such as Ahrefs or Check My Links.

- Check My Links, for instance, is a browser extension: run it on a web page and it highlights all the broken links on that page in red.

- Then replace the broken links with other relevant links or remove them.

Fixing broken links saves your crawl budget from being wasted on dead pages. Dead links are also frustrating for your users, so search engines recommend fixing them too.
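If you prefer to script the check, the sketch below sends a HEAD request to each link using only Python’s standard library and reports any that return a 4xx or 5xx code or can’t be reached at all. The function names and example URLs are hypothetical; some servers reject HEAD requests, so a production checker might fall back to GET, and you should throttle requests on large sites.

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable, Optional


def http_status(url: str, timeout: float = 10.0) -> Optional[int]:
    """Return the final HTTP status for `url` (redirects are followed), or None on failure."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # 4xx and 5xx responses are raised as HTTPError
    except OSError:
        return None  # DNS failure, refused connection, timeout, etc.


def find_broken_links(
    urls: Iterable[str],
    get_status: Callable[[str], Optional[int]] = http_status,
) -> list[tuple[str, Optional[int]]]:
    """Return (url, status) pairs for links that error or can't be reached."""
    broken = []
    for url in urls:
        status = get_status(url)
        if status is None or status >= 400:
            broken.append((url, status))
    return broken


# Offline example with made-up statuses; call find_broken_links(urls)
# without get_status to check live URLs instead.
fake_statuses = {
    "https://example.com/shoes": 200,
    "https://example.com/old-sale": 404,
    "https://example.com/partner-site": None,
}
print(find_broken_links(fake_statuses, get_status=fake_statuses.get))
```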

Use Hreflang Tags

Hreflang tags help search engines handle websites that have pages in multiple languages. They tell search engines how the different language and regional versions of a page relate to each other, and each tag can specify both a language and a region.

This is how an hreflang tag looks:

`<link rel="alternate" href="https://example.com" hreflang="en-gb" />`

This tag targets English speakers in the UK. Note that the region code for the UK is `gb` (from ISO 3166-1), not `uk`. If you want to target English speakers globally, remove the region code at the end (`hreflang="en"`).

Similarly, you can apply tags for the languages and the regions you are targeting.
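On sites with many language versions, hreflang tags are usually generated rather than written by hand, and each version of a page should list every version, including itself. The sketch below builds that set of tags from a mapping of hreflang codes to URLs; the example.com URLs are placeholders, and `x-default` marks the fallback page for users whose language or region doesn’t match any listed version.

```python
from html import escape


def hreflang_tags(versions: dict[str, str]) -> str:
    """Build one <link rel="alternate"> tag per language/region version of a page."""
    return "\n".join(
        f'<link rel="alternate" href="{escape(url)}" hreflang="{escape(code)}" />'
        for code, url in versions.items()
    )


# Placeholder URLs; "gb" is the ISO 3166-1 region code for the UK.
page_versions = {
    "en-gb": "https://example.com/en-gb/",
    "en-au": "https://example.com/en-au/",
    "en": "https://example.com/en/",
    "x-default": "https://example.com/",
}
print(hreflang_tags(page_versions))
```

The same block of tags goes in the `<head>` of every version it lists, so the versions all point at each other consistently.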

Solve Crawling Issues

Fixing crawl errors helps search engines spend your crawl budget on pages that actually work. Google groups crawl errors into two categories:

Site Errors

Site errors mean Googlebot is unable to crawl your entire site. Common site errors are:

- DNS error: A Domain Name System (DNS) error occurs when Googlebot can’t connect to your domain.

- Server error: Googlebot can connect to your domain but can’t load the pages.

- Robots error: Googlebot can’t retrieve your robots.txt file.

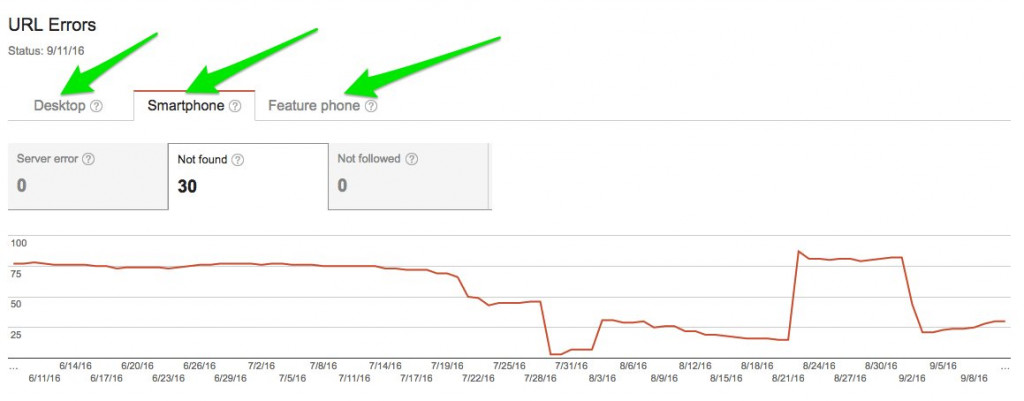

URL Errors

URL errors are limited to specific pages and are easier to solve. These errors include 404 & soft 404 errors or access denied errors (Googlebot is blocked from accessing the page).

You can find URL errors, grouped by reason, in the Page indexing report in Google Search Console.
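When reviewing URL errors in bulk, for example from server logs or a crawler export, it can help to bucket status codes into the categories described above. The mapping below is a simplified assumption, not Search Console’s exact logic; in particular, soft 404s can’t be detected from the status code alone because they return 200, and the sample codes are made up.

```python
from collections import Counter


def classify_status(code: int) -> str:
    """Bucket an HTTP status code into a rough crawl-error category (simplified)."""
    if code in (404, 410):
        return "not found"
    if code in (401, 403):
        return "access denied"
    if 500 <= code <= 599:
        return "server error"
    if 300 <= code <= 399:
        return "redirect"
    if 200 <= code <= 299:
        return "ok (soft 404s still possible)"
    return "other"


# Hypothetical status codes pulled from a crawl or log export.
codes = [200, 200, 404, 403, 503, 301, 410]
summary = Counter(classify_status(code) for code in codes)
for category, count in summary.most_common():
    print(category, count)
```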

You need to conduct a periodic SEO audit of your website to find and fix the crawl errors to optimise your crawl budget.

Final Thoughts

Search engines treat users as their top priority, so anything that improves the usability of your site adds value for SEO, and often for your crawl budget too. Similarly, anything that makes things difficult for your visitors hinders your SEO performance.

Every small improvement counts when it comes to optimising your site’s crawl budget. So keep an eye on factors that waste it, such as duplicate and thin content, soft 404 errors, and redirect chains.

Similarly, follow the practices that optimise your crawl budget, such as building a user- and SEO-friendly website, fixing crawl errors, and improving your link profile. Also, check your crawl stats report regularly to get the latest insights into your crawl budget.

If you want some help with optimising the crawl budget for your website, you can get in touch with the leading SEO agency in Melbourne.

Industries We Work With

Businesses Just Like Yours.

- Accountants SEO

- Asbestos Removal SEO

- Blinds & Shutters SEO

- Cosmetic Physician SEO

- Doctors SEO

- Financial Services SEO

- Flooring Companies SEO

- Healthcare SEO

- Heating & Cooling (HVAC) SEO

- Hotel & Accommodation SEO

- Laser Clinic SEO

- Locksmith SEO

- Office Fitout SEO

- Optometrists SEO

- Real Estate SEO

- Security Companies SEO

- Windows & Doors SEO

- Mechanic SEO

- Electrician SEO

- Lawyers SEO

- Fashion SEO

- SAAS SEO

- Dentist SEO

- Plumber SEO

- Florist SEO

- Nonprofit SEO

- Solar Installers SEO

- Kitchen Renovation SEO

- NDIS SEO

- Removalists SEO

- We work with all businesses, so get in touch

DIGITAL MARKETING FOR ALL OF AUSTRALIA

- SEO Agency Melbourne

- SEO Agency Sydney

- SEO Agency Brisbane

- SEO Agency Adelaide

- SEO Agency Perth

- SEO Agency Canberra

- SEO Agency Hobart

- SEO Agency Darwin

- SEO Agency Gold Coast

- We work with all businesses across Australia
